A Misunderstood Superpower

“Compilers? Is that not something only old professors obsess over?”

That is the stereotype. People usually think of compilers as something that belongs in the dusty corners of computer science, with no use outside academia or obscure low-level systems work.

But that’s not what I saw as a developer navigating modern software. I realized that compilers aren’t relics; they are among the most powerful tools we have. They do not just turn your code into machine instructions: they enable performance, safety, portability, and more.

So here is the question:

What if compilers are more important today than ever before, not just behind the scenes but in the heart of everyday tools that we rely on?

I Saw the Sign

At some point, almost everyone who studies computer science dreams of building their own programming language, and I was no exception. For most of us, though, it remains a dream: something that sounds cool in theory but seems too complex to try.

I never set out to become a compiler engineer. I thought that such stuff was reserved for language theorists with PhDs and whiteboards filled with Greek letters.

But curiosity got the better of me. One day, I mentioned this dream to a friend, expecting a shrug or a laugh. Instead, he lit up and said: “You should check out this book: Crafting Interpreters by Robert Nystrom.” I had never heard of it. But that recommendation was a spark.

The moment I started reading, something clicked. The writing was clear, and the code was approachable. What started as late-night reading quickly turned into hands-on experimentation. I wasn’t just reading — I was building. A tokenizer. A parser. A virtual machine. Each step felt like uncovering a hidden layer of how programming works.

Was it overwhelming sometimes? Definitely. Bugs drove me crazy, some concepts took days to make sense, and frustrating moments almost made me give up. But I found it strangely addictive to write code that transforms others’ code.

I wasn’t aiming to create the next Python. I just wanted to learn. Along the way, I discovered how compilers shape your thinking and how they balance elegance with performance, and I came to appreciate the invisible machinery that runs our software.

Compilers Power the AI Boom

To get the answer, take a step back and look at where modern computing is headed — especially with AI — and you will see that compilers are everywhere.

Every time someone runs an AI model, whether it’s an LLM, image classifier, or a recommendation engine, there’s a compiler working in the background to make it fast, efficient, and hardware-aware.

NVCC: The Compiler Foundations of Deep Learning

Take NVCC (the NVIDIA CUDA Compiler) as an example. It has been a cornerstone of GPU computing since CUDA’s release in 2007, making it possible to write code that executes across thousands of parallel threads.

Before the AI boom, CUDA was mainly used in scientific computing. But once researchers discovered that neural networks train drastically faster on GPUs than on CPUs, thanks to the GPU’s ability to run matrix operations in parallel, CUDA became the bedrock of modern deep learning.
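
To make "matrix operations in parallel" concrete, here is a minimal pure-Python sketch of the one-thread-per-output-element pattern that GPU matrix multiplication is often built around. It is not CUDA and not how any framework implements it; the names `matmul_kernel` and `launch` are made up for illustration, and the "threads" here simply run one after another.

```python
# Toy emulation of a GPU-style matrix multiply: one logical "thread"
# per output element. It runs sequentially here; a GPU could run the
# kernel calls in parallel because none of them depends on another.

def matmul_kernel(row, col, a, b, out):
    """Compute a single output element, as one GPU thread would."""
    total = 0.0
    for k in range(len(b)):
        total += a[row][k] * b[k][col]
    out[row][col] = total


def launch(a, b):
    """Hypothetical 'kernel launch': call the kernel once per output cell."""
    rows, cols = len(a), len(b[0])
    out = [[0.0] * cols for _ in range(rows)]
    for row in range(rows):        # on a GPU these two loops disappear:
        for col in range(cols):    # each (row, col) pair is its own thread
            matmul_kernel(row, col, a, b, out)
    return out


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
print(launch(a, b))  # [[19.0, 22.0], [43.0, 50.0]]
```

The point of the sketch is the shape of the work: every output cell is an independent little job, which is exactly the kind of workload thousands of GPU threads can share.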

Frameworks like TensorFlow, PyTorch, and Caffe started leaning heavily on CUDA to accelerate training. Underneath every training script, the high-level tensor operations end up as GPU kernels, and a compiler like NVCC is what turns that kernel code into instructions the GPU can run.

As models got more complex and hardware became more diverse, manually writing kernel code for every ML operation became unsustainable. That’s when the idea of machine learning compilers started to take off.

The Rise of Machine Learning Compilers

One of the major turning points was TVM (Tensor Virtual Machine), an open-source ML compiler developed at the University of Washington and later donated to the Apache Software Foundation.

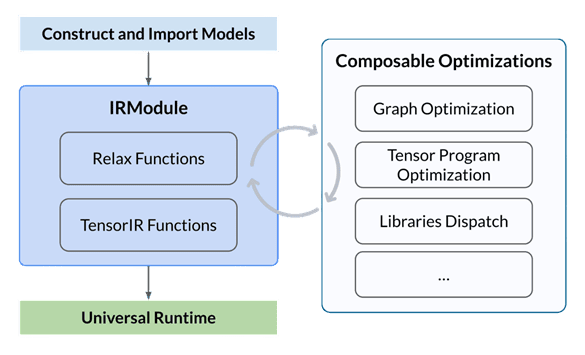

TVM works this way:

- You construct or import a pre-trained model (e.g., from PyTorch or ONNX)

- TVM transforms it into a low-level intermediate representation (IR)

- It performs optimization transformations, tensor program optimization, and library dispatching

- It builds the optimized model and executes it on the target device — CPU, GPU, or custom accelerator
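
The four steps above can be sketched in miniature. The following is a toy pipeline in plain Python, not the TVM API: the "model" is a nested tuple, the IR is a flat list of instructions, and the only optimization is constant folding. Every name here (`lower`, `fold_constants`, `build`) is an assumption made up for illustration.

```python
# Toy compile pipeline mirroring the four steps above.
# This is NOT TVM code; all names are hypothetical.

OPS = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}


def lower(expr, ir):
    """Step 2: flatten a nested expression into a linear IR.
    Returns the name of the register that holds the result."""
    if isinstance(expr, str):            # an input variable such as "x"
        return expr
    if expr[0] == "const":
        dest = f"t{len(ir)}"
        ir.append((dest, "const", expr[1]))
        return dest
    lhs = lower(expr[1], ir)
    rhs = lower(expr[2], ir)
    dest = f"t{len(ir)}"
    ir.append((dest, expr[0], lhs, rhs))
    return dest


def fold_constants(ir):
    """Step 3: one optimization pass. Ops whose inputs are all known
    constants are evaluated at compile time instead of at run time."""
    known, out = {}, []
    for instr in ir:
        dest, op = instr[0], instr[1]
        if op == "const":
            known[dest] = instr[2]
            out.append(instr)
        elif instr[2] in known and instr[3] in known:
            known[dest] = OPS[op](known[instr[2]], known[instr[3]])
            out.append((dest, "const", known[dest]))
        else:
            out.append(instr)
    return out


def build(ir, result):
    """Step 4: 'build' for a target. The target here is just a Python
    function that interprets the IR."""
    def run(**inputs):
        env = dict(inputs)
        for instr in ir:
            if instr[1] == "const":
                env[instr[0]] = instr[2]
            else:
                env[instr[0]] = OPS[instr[1]](env[instr[2]], env[instr[3]])
        return env[result]
    return run


# Step 1: "import" a model, here the expression x * 2 + 3 * 4.
model = ("add", ("mul", "x", ("const", 2.0)),
                ("mul", ("const", 3.0), ("const", 4.0)))
ir = []
result = lower(model, ir)
optimized = fold_constants(ir)
run = build(optimized, result)
print(run(x=5.0))  # 22.0
```

After folding, the `3 * 4` multiplication is replaced by the constant `12.0`, so it never runs at execution time. A real ML compiler does the same kind of thing at a much larger scale, with many more passes and real code generation for the target hardware.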

It’s Not Just TVM: A Quiet Revolution in ML Compilation

While TVM was a big step forward, it wasn’t the only one.

MLIR is an open-source compiler infrastructure that originated at Google and is now part of the LLVM project. It helps developers build reusable compiler components, making it easier to support new hardware or frameworks without starting from scratch. Today it is used inside TensorFlow and in projects such as ONNX-MLIR.

Building on that, Google and a group of industry partners introduced OpenXLA, an open-source project that brings together XLA, Google’s earlier ML compiler, with MLIR-based components.

XLA was great at speeding up TensorFlow by turning model operations into fast, low-level code for CPUs, GPUs, and TPUs — but it was tightly locked into TensorFlow.

OpenXLA changes that. It’s modular, open-source, and works across JAX, PyTorch, and more. It lets models run efficiently on everything from cloud TPUs to local GPUs to custom edge devices, giving developers both performance and flexibility.
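
One well-known optimization that compilers such as XLA apply is operator fusion: merging several elementwise operations into a single pass over the data so that no intermediate buffers are needed. Here is a minimal sketch of the idea in plain Python. It is not XLA code, and the names `run_unfused` and `fuse` are made up for illustration.

```python
# Toy illustration of operator fusion. Not XLA code; all names are
# hypothetical.

def scale(x):
    return x * 2.0


def shift(x):
    return x + 1.0


def relu(x):
    return max(x, 0.0)


def run_unfused(xs, ops):
    """Apply each op as its own pass, building a full intermediate
    list after every step."""
    for op in ops:
        xs = [op(x) for x in xs]
    return xs


def fuse(ops):
    """Combine elementwise ops into one function so the data is
    traversed once and no intermediate lists are created."""
    def fused(xs):
        out = []
        for x in xs:
            for op in ops:
                x = op(x)
            out.append(x)
        return out
    return fused


ops = [scale, shift, relu]
data = [-2.0, -0.5, 0.0, 1.5]
fused = fuse(ops)
print(fused(data))  # [0.0, 0.0, 1.0, 4.0]
```

Both versions compute the same result. The fused one touches each element once, which on real hardware means far less memory traffic.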

The Takeaway

Modern AI needs hardware. Hardware needs compilers. And compilers need engineers — not just to maintain the magic, but to push it forward.

No One Wants to Be a Compiler Engineer

In most CS programs, students take one compiler course — often filled with theory, automata, and parsing algorithms — then promptly move on. When choosing a career path, the vast majority gravitate toward front-end, back-end, ML, or data science. Few ever look back.

And that might become a serious problem.

Modern technologies, from the apps we use to the AI models we rely on, depend heavily on compiler infrastructure. Frameworks like TensorFlow and PyTorch, and even platforms like WebAssembly, are all built on top of sophisticated compilers. They’re just hidden behind clean APIs.

Under the hood, it’s compilers all the way down, transforming high-level instructions into fast, optimized code tailored for CPUs, GPUs, TPUs, and custom chips.

Very few people understand how any of that works. The number of engineers who can build these systems is small, and the demand is growing. There are hundreds of open positions at companies building AI frameworks, browsers, cloud runtimes, game engines, and hardware accelerators, and many of those companies struggle to fill them.

Compilers used to be a niche topic. Now they’re everywhere.

Why You Should Build a Language (Even a Tiny One)

You don’t need to be a genius to build a programming language. Building even a tiny toy language can be one of the most rewarding learning experiences in CS.

When you build a language, you’re not just writing syntax rules — you’re diving into the core ideas that shape all of software:

- How parsers and grammars work

- How semantics are designed

- How code is ultimately translated into something machines can run

It forces you to see the big picture — not just how code is written, but how it runs, how it’s optimized, and what’s happening behind the scenes.
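
To show how small "tiny" can be, here is a minimal sketch of a complete toy language for arithmetic: a tokenizer, a recursive-descent parser that emits bytecode, and a stack-based virtual machine. It is my own illustration rather than code from Crafting Interpreters, and the grammar, bytecode names, and function names are all made up for this example.

```python
import operator
import re

BINARY = {"add": operator.add, "sub": operator.sub,
          "mul": operator.mul, "div": operator.truediv}


def tokenize(source):
    """Turn source text into a list of (kind, value) tokens."""
    tokens = []
    for match in re.finditer(r"(\d+(?:\.\d+)?)|\S", source):
        if match.group(1):
            tokens.append(("num", float(match.group(1))))
        elif match.group() in "+-*/()":
            tokens.append(("op", match.group()))
        else:
            raise SyntaxError(f"unexpected character {match.group()!r}")
    return tokens


class Parser:
    """Recursive-descent parser that emits stack bytecode.
    Grammar: expr   := term (('+' | '-') term)*
             term   := factor (('*' | '/') factor)*
             factor := NUMBER | '-' factor | '(' expr ')'"""

    def __init__(self, tokens):
        self.tokens, self.pos, self.code = tokens, 0, []

    def peek(self):
        if self.pos < len(self.tokens):
            return self.tokens[self.pos]
        return ("eof", None)

    def advance(self):
        token = self.peek()
        self.pos += 1
        return token

    def expr(self):
        self.term()
        while self.peek() in (("op", "+"), ("op", "-")):
            op = self.advance()[1]
            self.term()
            self.code.append(("add",) if op == "+" else ("sub",))

    def term(self):
        self.factor()
        while self.peek() in (("op", "*"), ("op", "/")):
            op = self.advance()[1]
            self.factor()
            self.code.append(("mul",) if op == "*" else ("div",))

    def factor(self):
        token = self.advance()
        if token[0] == "num":
            self.code.append(("push", token[1]))
        elif token == ("op", "-"):
            self.factor()
            self.code.append(("neg",))
        elif token == ("op", "("):
            self.expr()
            if self.advance() != ("op", ")"):
                raise SyntaxError("expected ')'")
        else:
            raise SyntaxError(f"unexpected token {token[1]!r}")


def compile_source(source):
    """Front end: source text in, bytecode list out."""
    parser = Parser(tokenize(source))
    parser.expr()
    if parser.peek()[0] != "eof":
        raise SyntaxError("unexpected trailing input")
    return parser.code


def run(code):
    """Back end: a tiny stack-based virtual machine."""
    stack = []
    for instr in code:
        if instr[0] == "push":
            stack.append(instr[1])
        elif instr[0] == "neg":
            stack.append(-stack.pop())
        else:
            right, left = stack.pop(), stack.pop()
            stack.append(BINARY[instr[0]](left, right))
    return stack.pop()


print(compile_source("1 + 2 * 3"))
print(run(compile_source("(1 + 2) * 3")))  # 9.0
```

Operator precedence is never stated as a rule anywhere in the code. It falls out of the grammar: `term` sits below `expr`, so multiplication binds tighter than addition. Seeing that for the first time is one of those moments that makes building a language worth it.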

References

- The Apache Software Foundation. Apache TVM Documentation. https://tvm.apache.org/docs/index.html

- Wikipedia. MLIR (software). https://en.wikipedia.org/wiki/MLIR_(software)

- Google Open-Source Blog. OpenXLA is available now. https://opensource.googleblog.com/2023/03/openxla-is-ready-to-accelerate-and-simplify-ml-development.html

- Robert Nystrom. Crafting Interpreters. https://craftinginterpreters.com/